MS Thesis Project

Vision-and-Language Navigation for Autonomous Drone Search-and-Return in Urban Environments

Department of Computer Science and Engineering, University of California, Riverside

Abstract

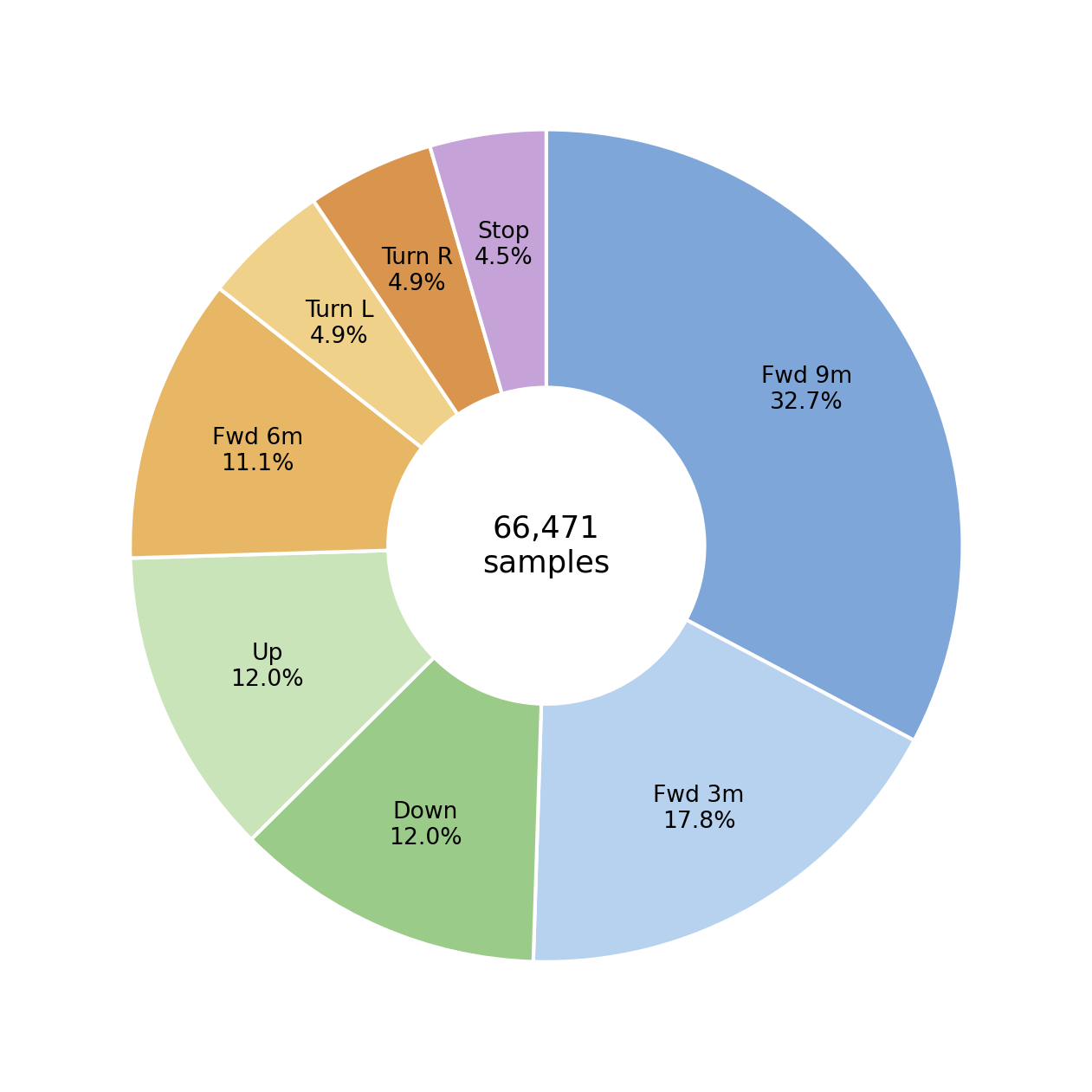

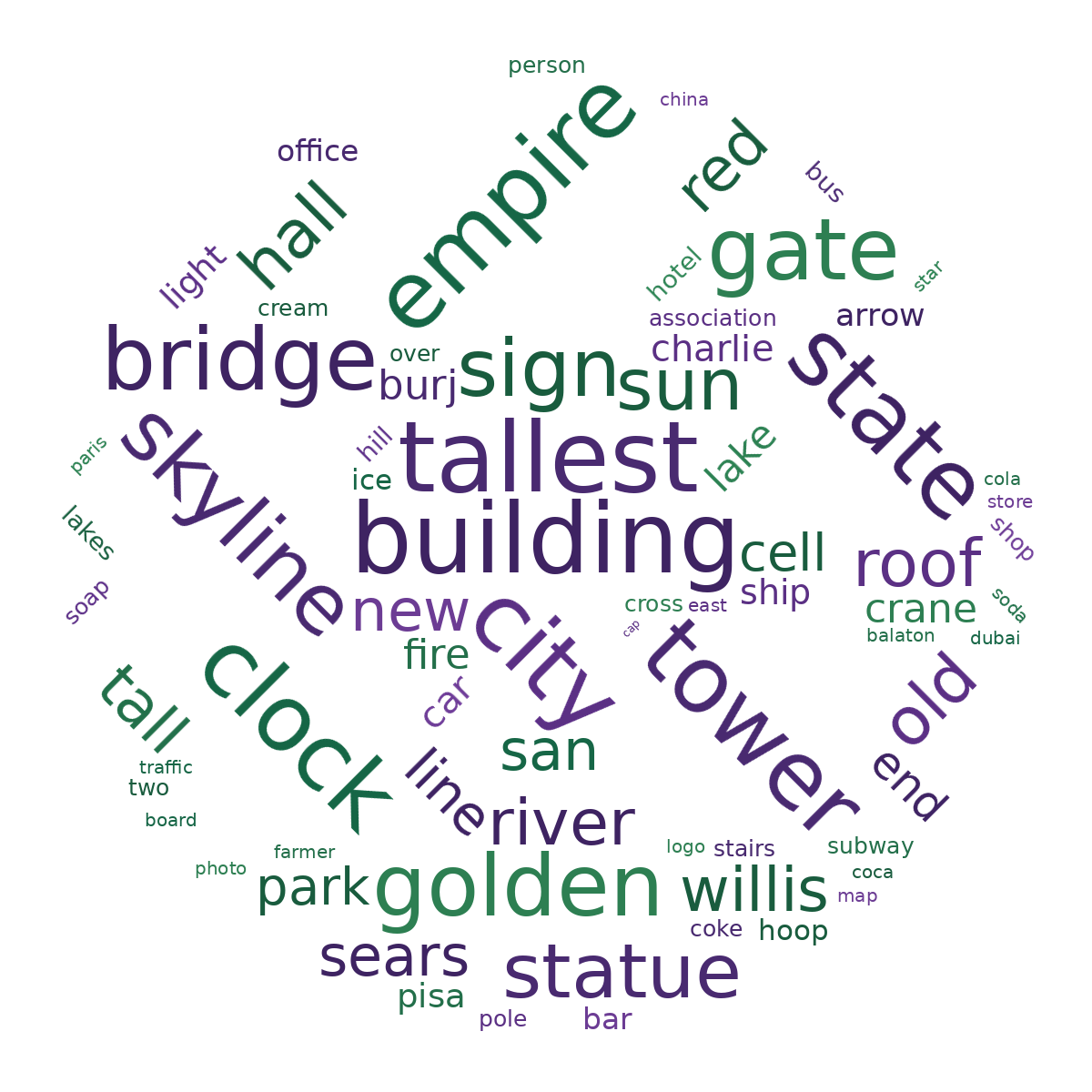

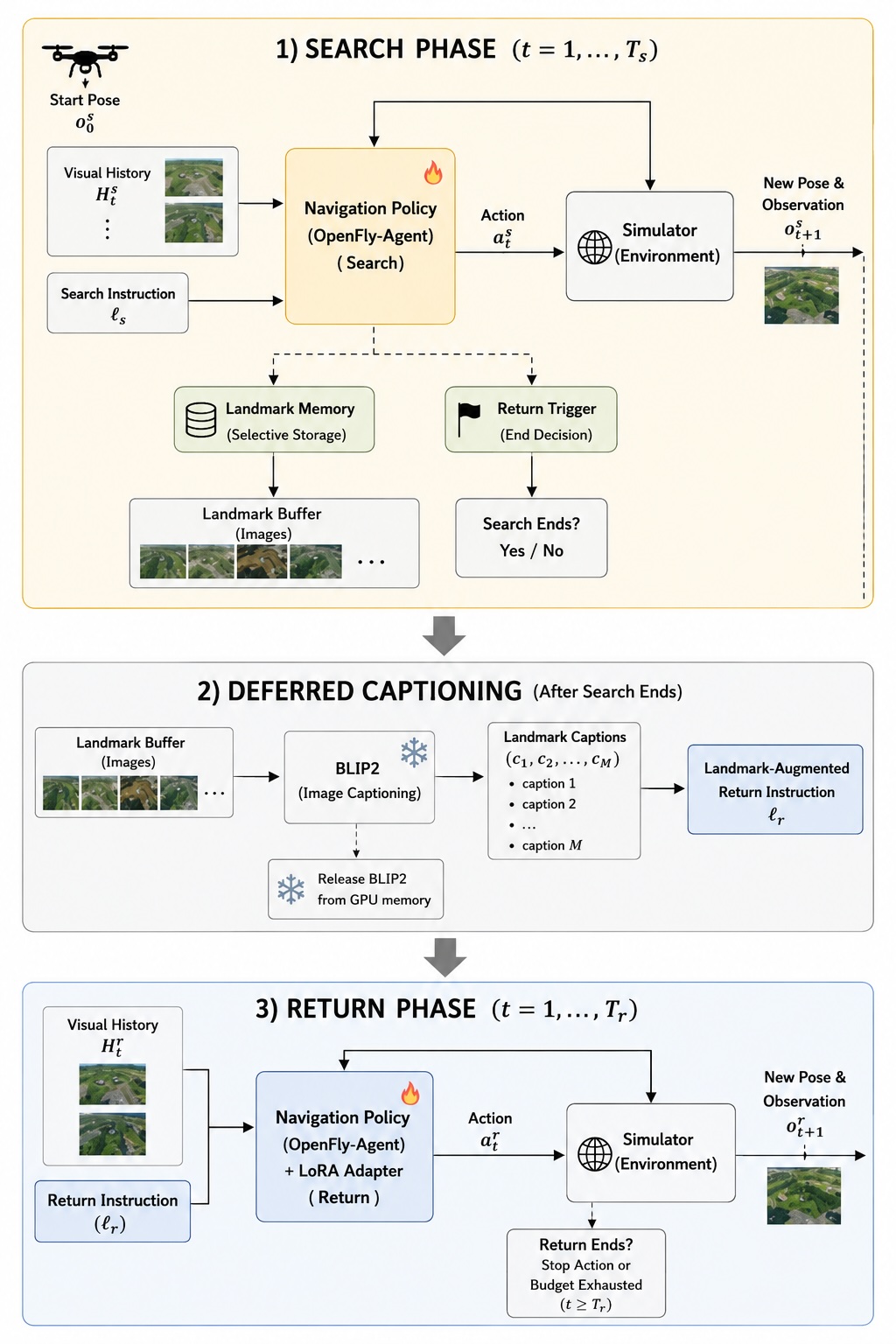

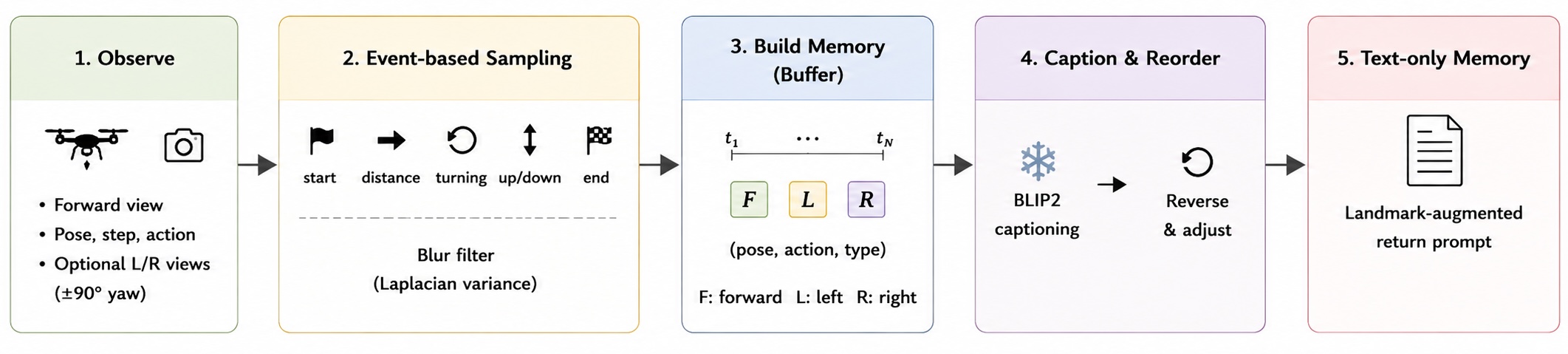

This study explores a search-and-return extension of aerial vision-and-language navigation (VLN), in which a drone first follows a language instruction to search for a target and then returns to its starting point. The main challenge is that the return phase depends on information collected during the search phase. This work builds a framework on top of OpenFly-Agent that adds a return trigger, a landmark memory module, and a return policy with an optional LoRA adapter. It also constructs SAR-Drone-VLN-3K, a generated dataset of search and return trajectories for training and analysis. Experiments show that the base OpenFly-Agent achieves only limited return performance when prompted with landmarks, while the current LoRA adapter often brings the drone near the start point but does not stop there reliably. These results suggest that search-and-return navigation is harder than one-way aerial VLN and calls for better memory design and decision-making.
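The gap between getting near the start point and actually stopping there mirrors the common VLN distinction between success and oracle success. A minimal sketch of that distinction for the return phase, assuming positions are 3D coordinates in meters and a hypothetical 20 m success radius (the project's actual threshold and evaluation code are not given here):

```python
import math
from typing import Sequence, Tuple

Point = Tuple[float, float, float]

# Hypothetical success radius in meters; not taken from the project.
SUCCESS_RADIUS_M = 20.0


def return_metrics(trajectory: Sequence[Point], start: Point,
                   radius: float = SUCCESS_RADIUS_M) -> dict:
    """Score the return phase of one episode.

    trajectory: positions visited during the return phase; the last entry
    is where the agent issued its stop action.
    """
    final_error = math.dist(trajectory[-1], start)
    closest = min(math.dist(p, start) for p in trajectory)
    return {
        "final_error": final_error,
        # Success counts only where the agent actually stopped.
        "success": final_error <= radius,
        # Oracle success counts any visit within the radius, even without stopping.
        "oracle_success": closest <= radius,
    }


episode = [(100.0, 0.0, 10.0), (5.0, 0.0, 0.0), (35.0, 0.0, 0.0)]
print(return_metrics(episode, start=(0.0, 0.0, 0.0)))
```

In this toy episode the drone passes within 5 m of the start but stops 35 m away, so it is an oracle success but not a success, which is the failure pattern described for the LoRA adapter.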

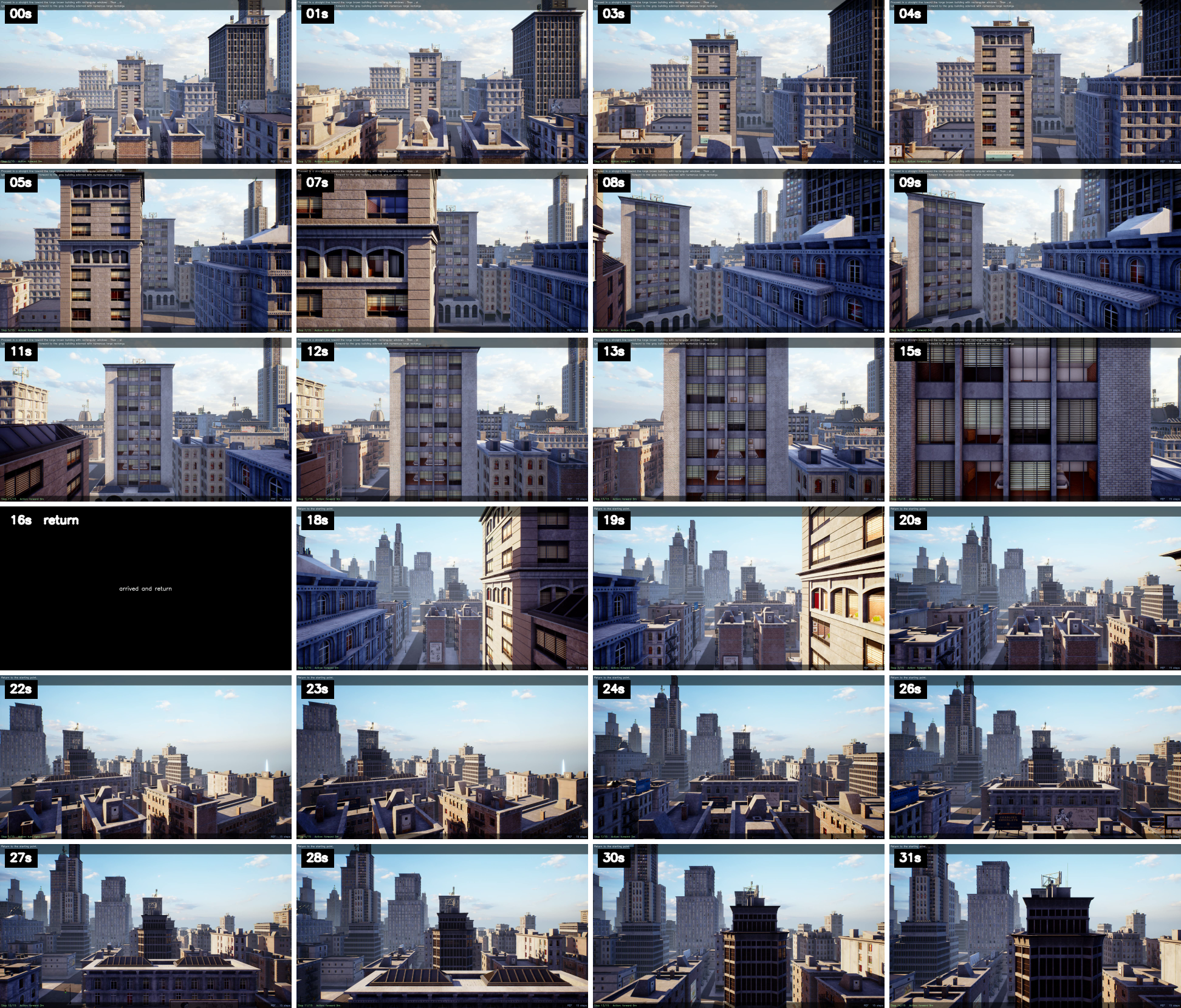

Search-and-Return Replays

Overview

The project extends one-way aerial VLN into a two-phase task. The agent searches for the target, records a compact landmark memory, triggers the return phase, and then navigates back to its original start position.
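The two-phase loop can be sketched as a small state machine. This is an illustration under stated assumptions, not the OpenFly-Agent interface: the search and return policies are stand-in callables, the return trigger is assumed to fire when a target detector reports the target, and the landmark memory is assumed to be a deduplicated list of landmark names with the positions where they were first seen. `SearchAndReturnController`, `Observation`, and the action strings are hypothetical names.

```python
from dataclasses import dataclass, field
from enum import Enum, auto
from typing import Callable, List, Optional, Tuple

Point = Tuple[float, float, float]


class Phase(Enum):
    SEARCH = auto()
    RETURN = auto()
    DONE = auto()


@dataclass
class Observation:
    position: Point
    landmark: Optional[str]  # landmark visible at this step, if any (hypothetical detector)
    target_visible: bool     # hypothetical target-detector output


@dataclass
class SearchAndReturnController:
    # Stand-in policies: (prompt, observation) -> action string such as "forward" or "stop".
    search_policy: Callable[[str, Observation], str]
    return_policy: Callable[[str, Observation], str]
    instruction: str
    phase: Phase = Phase.SEARCH
    memory: List[Tuple[str, Point]] = field(default_factory=list)

    def _remember(self, obs: Observation) -> None:
        # Keep memory compact: store each landmark once, at its first sighting.
        if obs.landmark and all(name != obs.landmark for name, _ in self.memory):
            self.memory.append((obs.landmark, obs.position))

    def return_prompt(self) -> str:
        # Replay landmarks in reverse order of discovery to describe the way back.
        route = ", ".join(name for name, _ in reversed(self.memory))
        if route:
            return f"Fly back to the start position via: {route}"
        return "Fly back to the start position."

    def step(self, obs: Observation) -> str:
        if self.phase is Phase.SEARCH:
            self._remember(obs)
            if not obs.target_visible:
                return self.search_policy(self.instruction, obs)
            self.phase = Phase.RETURN  # assumed return trigger: target detected
        if self.phase is Phase.RETURN:
            action = self.return_policy(self.return_prompt(), obs)
            if action == "stop":
                self.phase = Phase.DONE
            return action
        return "stop"  # DONE: the episode has ended, keep the drone stopped
```

A toy run: the controller flies forward past a fountain and a bridge, switches to the return phase when the target appears, and prompts the return policy with "Fly back to the start position via: bridge, fountain". Keeping memory as a short list of names rather than full observations is one way to keep the return prompt small; whether the project stores positions, images, or text is not specified here.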

Key Figures

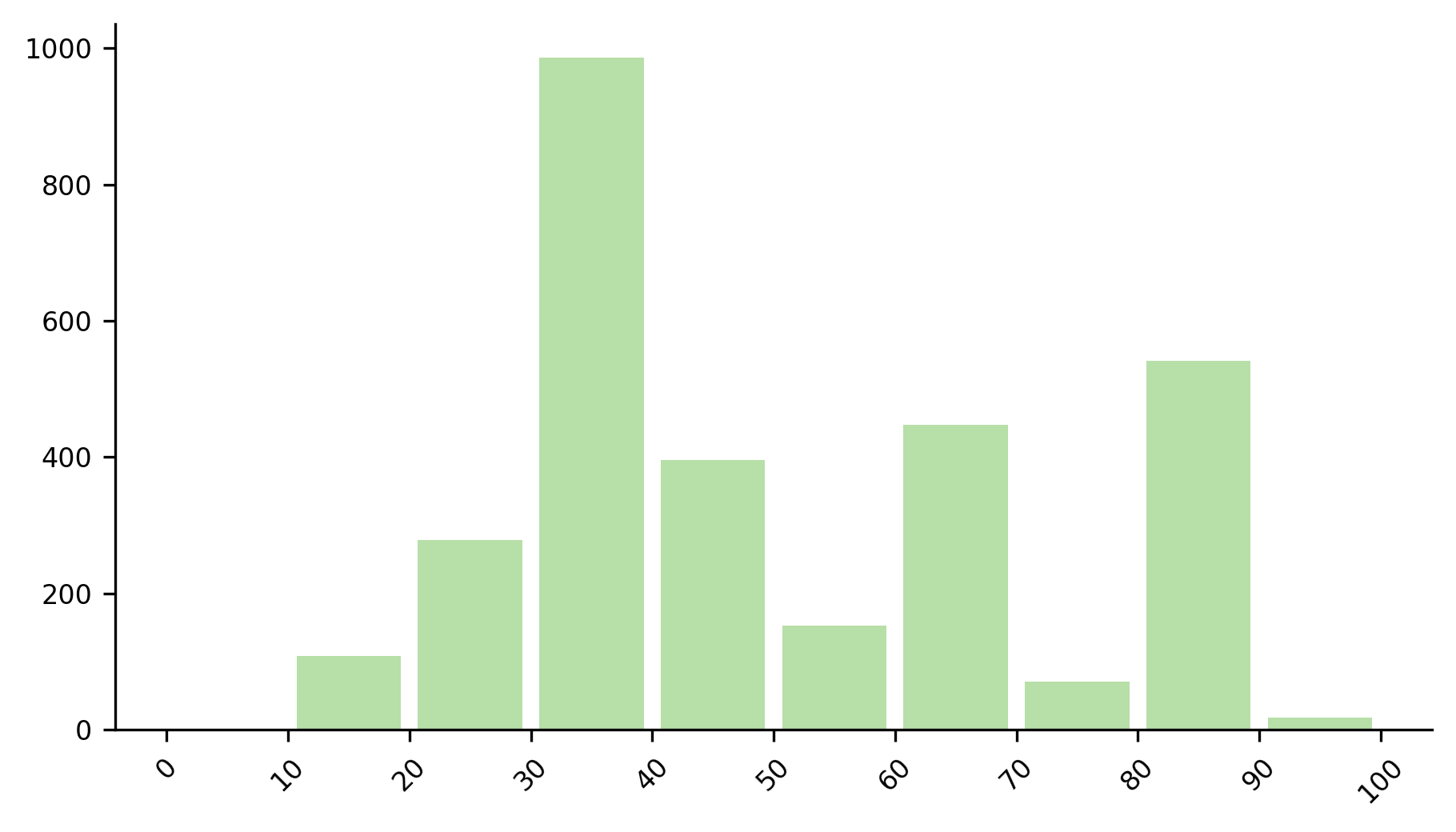

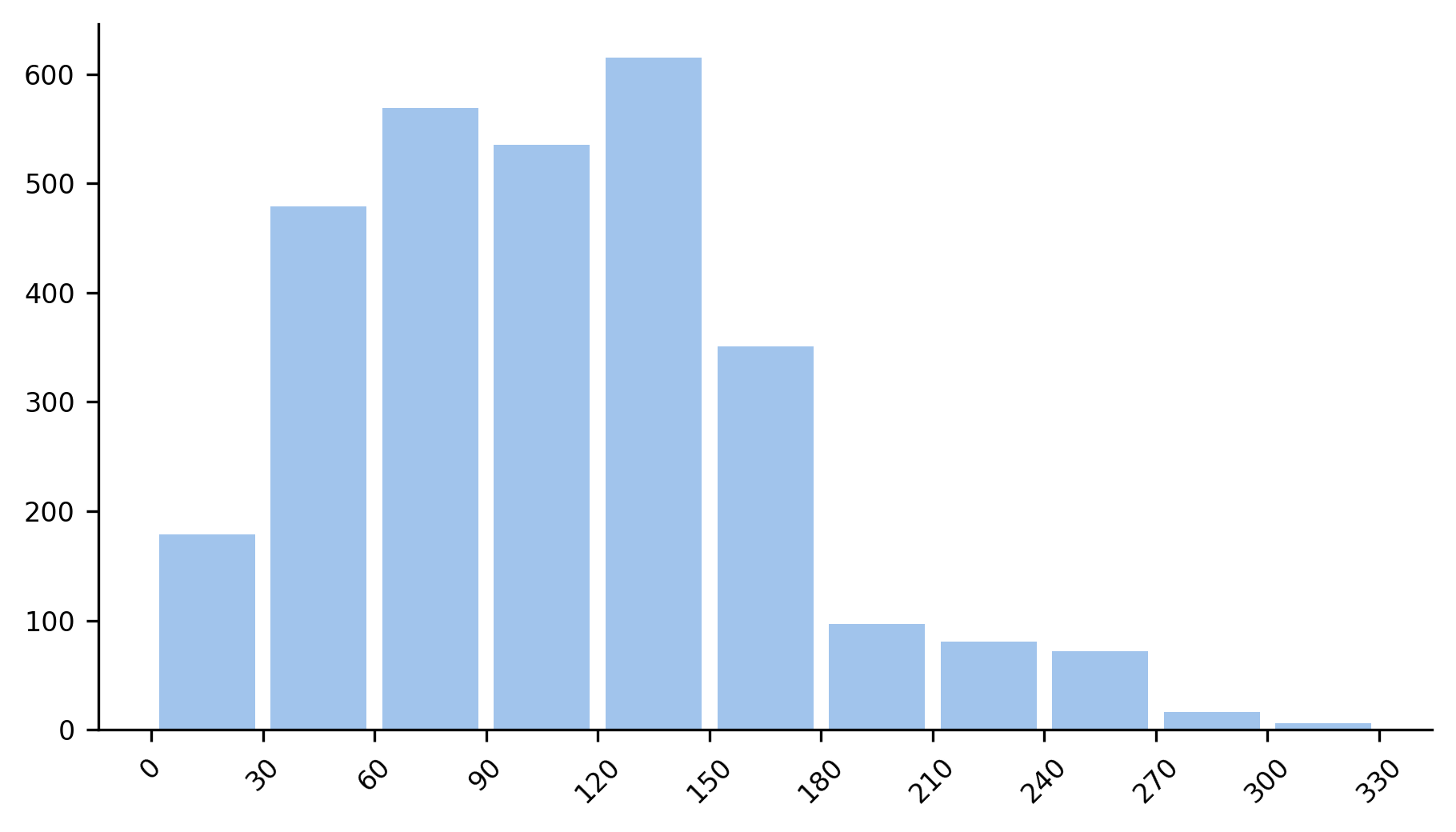

Dataset Analysis